AI Agents vs Agentic AI: What’s the Difference and Why Does It Matter?

Here's the frustrating reality: most articles that claim to explain the difference between AI agents and agentic AI end up conflating them anyway. The distinction isn't just semantic. It reflects a fundamentally different philosophy about what autonomous software should do, how far it should go, and who or what remains in charge.

If you're making decisions about AI adoption, whether you're a developer, a product lead, or an executive trying to separate genuine capability from marketing noise, understanding this difference is one of the most practical things you can do right now.

So let's break it down clearly, without the hype.

Starting at the Source: Clear Definitions

Before the comparison, you need a firm footing on what each term actually means.

AI Agent

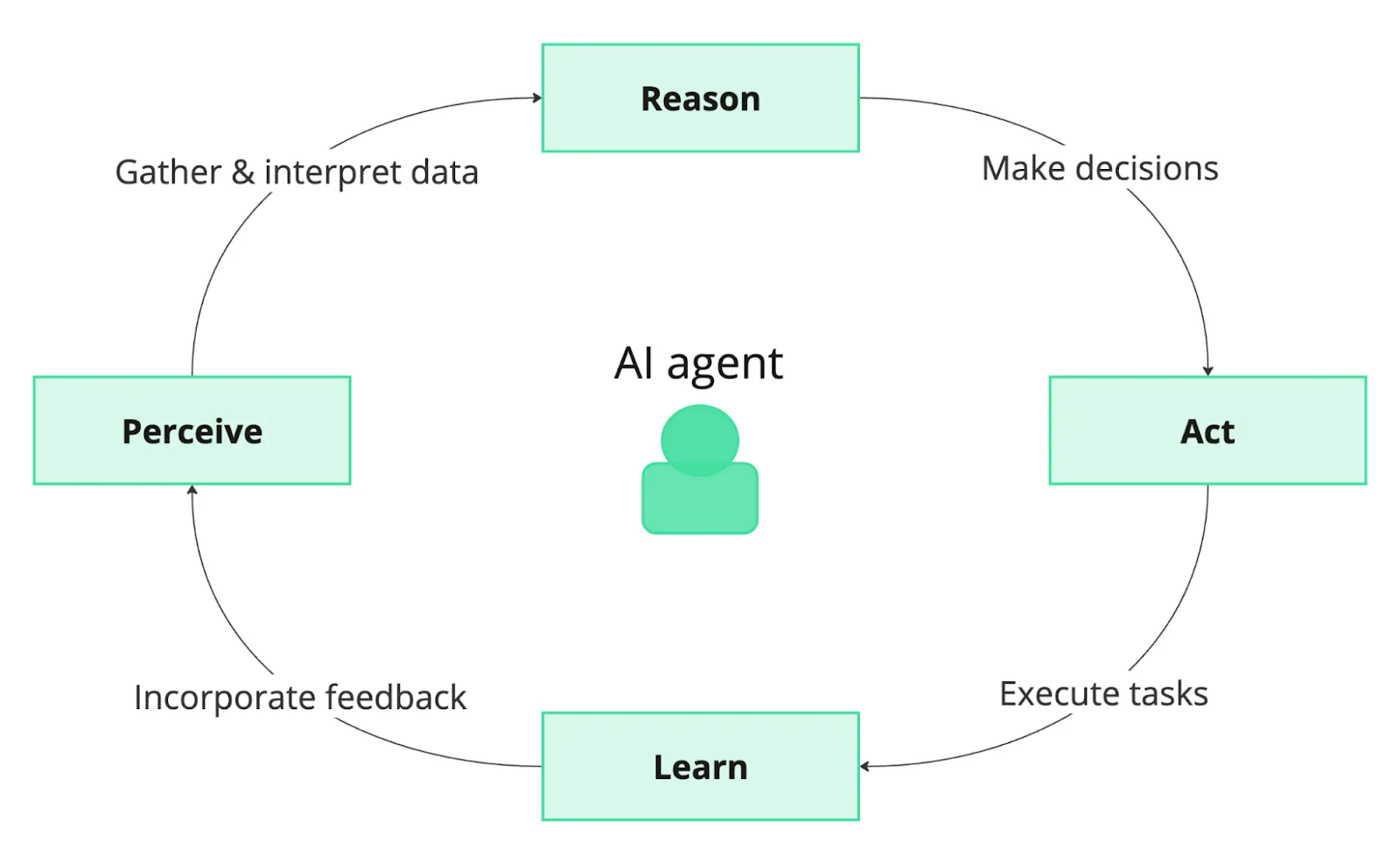

An AI agent is an autonomous software entity designed to execute a specific, bounded task within a defined environment. It perceives inputs, takes action, and repeats the cycle, always within tight parameters. AI agents are goal-oriented, reactive systems that perform constrained operations, akin to “digital employees” executing specialized functions. Common examples include scripted chatbots, developer copilots, and industrial automation bots.

Agentic AI

Agentic AI is a higher-order system characterized by broad autonomy, adaptive planning, and the ability to coordinate multiple agents toward complex, multi-step goals. These systems act as “digital partners” or orchestrators, pursuing overarching objectives across dynamic, multi-system environments.

Under the Hood: How These Systems Are Actually Built

Architecture reveals intent. The way a system is built tells you a great deal about what it was designed to do and what it isn't designed to handle.

The Five Types of AI Agents

AI agents aren't monolithic. They fall along a spectrum of complexity, from purely reactive systems to sophisticated learners:

- Simple Reflex Agents: Operate on hardcoded rules with no memory. They react to the current input only — nothing more. A robot vacuum that turns when it hits a wall fits perfectly here.

- Model-Based Reflex Agents: Maintain an internal representation of the world, which lets them function reasonably well even when they can't see the whole picture.

- Goal-Based Agents: Know where they need to end up and use planning and search to get there. They're not just reacting; they're navigating toward an objective.

- Utility-Based Agents: Go one step further. When multiple paths lead to the goal, they pick the best one, using a utility function to weigh trade-offs.

- Learning Agents: The most sophisticated of the individual agent types. They improve through experience, using feedback loops to continuously refine their performance over time. This is the foundation that agentic systems build on.
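To make the first few categories concrete, here is a minimal Python sketch covering three of the five types: simple reflex, model-based reflex, and utility-based. Everything in it is a hypothetical illustration rather than a reference implementation: the function and class names, the grid-cell representation, and the weights in the utility function are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

Cell = Tuple[int, int]


def simple_reflex_vacuum(percept: str) -> str:
    """Simple reflex: a hardcoded rule on the current percept, no memory."""
    return "turn" if percept == "bump" else "forward"


class ModelBasedVacuum:
    """Model-based reflex: remembers walls it has already bumped into."""

    def __init__(self) -> None:
        self.known_walls: Set[Cell] = set()  # the internal world model

    def act(self, cell_ahead: Cell, percept: str) -> str:
        if percept == "bump":
            self.known_walls.add(cell_ahead)
            return "turn"
        # Use the model to avoid a wall the sensors are not reporting right now.
        return "turn" if cell_ahead in self.known_walls else "forward"


@dataclass
class Route:
    name: str
    minutes: float
    cost: float


def utility(route: Route) -> float:
    """Hypothetical trade-off: penalize travel time, and cost at half weight."""
    return -route.minutes - 0.5 * route.cost


def choose_route(routes: List[Route]) -> Route:
    """Utility-based: every route reaches the goal, so pick the highest utility."""
    return max(routes, key=utility)
```

The reflex function has no state at all, the model-based class changes its answer for the same percept once it has learned where a wall is, and the utility-based chooser compares options that all satisfy the goal. A learning agent would go further and adjust something like the utility weights from feedback.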

How Agentic AI Is Architecturally Different

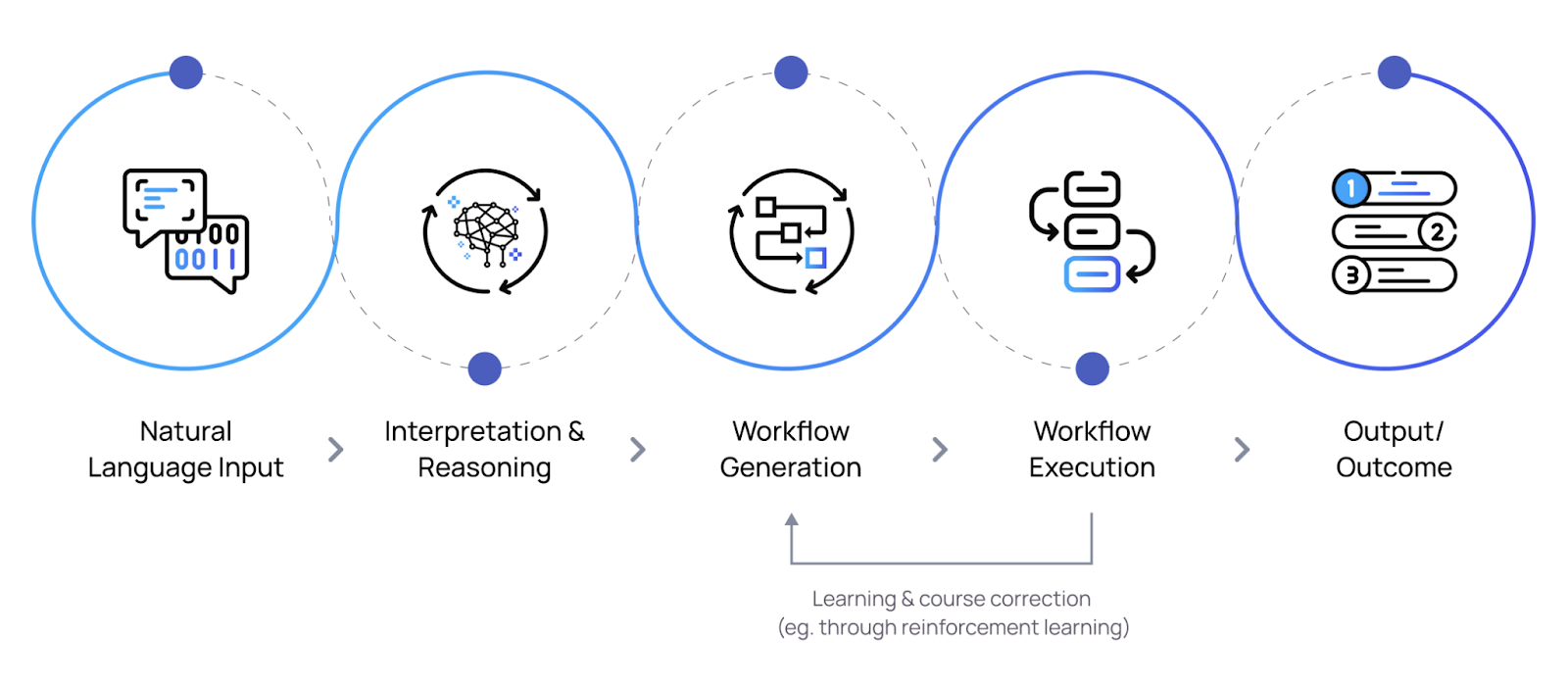

Agentic AI doesn't replace these agent types; it absorbs them into a larger structure. A typical agentic architecture includes a high-level planner (often powered by a large language model), persistent memory systems, integrated tools and APIs, and a coordination layer that routes tasks to specialized sub-agents and synthesizes their outputs.

This is where LLMs become genuinely transformative rather than just impressive. When a language model sits at the orchestration layer of a multi-agent system, it can interpret ambiguous instructions, decompose complex goals, and make real-time decisions about which agent handles what. That's a fundamentally different kind of system than a standalone chatbot.
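The shape of that architecture can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not how any particular framework works: `stub_planner` is a keyword-matching stand-in for the LLM planner, and the sub-agents, the `Orchestrator` class, and the task strings are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

SubAgent = Callable[[str], str]
Planner = Callable[[str], List[Tuple[str, str]]]


def billing_agent(task: str) -> str:
    return f"billing handled: {task}"


def shipping_agent(task: str) -> str:
    return f"shipping handled: {task}"


def stub_planner(goal: str) -> List[Tuple[str, str]]:
    """Stand-in for an LLM planner: turns a goal into (agent_name, task) steps."""
    steps = []
    if "refund" in goal:
        steps.append(("billing", "issue refund"))
    if "replacement" in goal:
        steps.append(("shipping", "send replacement"))
    return steps


class Orchestrator:
    """Coordination layer: plans, routes each step, and synthesizes results."""

    def __init__(self, planner: Planner, agents: Dict[str, SubAgent]) -> None:
        self.planner = planner
        self.agents = agents
        self.memory: List[str] = []  # record of results kept across runs

    def run(self, goal: str) -> str:
        results = []
        for agent_name, task in self.planner(goal):
            results.append(self.agents[agent_name](task))  # route to sub-agent
        self.memory.extend(results)
        return "; ".join(results)  # trivial synthesis step
```

In a real system the planner would call a language model and the synthesis step would do more than join strings, but the division of labor is the same: one component decides what needs doing, and the coordination layer decides who does it.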

Side-by-Side: The Honest Comparison

Here's where the distinction becomes concrete. These aren't just philosophical differences; they have direct implications for how you build, deploy, and govern AI systems.

The takeaway isn't that one is better than the other. It's that they solve different problems. Deploying agentic AI for a task that a well-scoped agent could handle is costly overkill. Relying on a simple agent to manage a complex, dynamic workflow is a recipe for failure.

Real-World Applications

Theory is one thing. Seeing these systems at work in production environments is where the value crystallizes.

AI Agents in Action

- Autonomous Vehicles: Sensor fusion and navigation decisions in real time — highly specialized, extraordinarily precise within a defined domain.

- Gaming NPCs: Non-player characters that adapt their behavior to player actions, creating believable and responsive game worlds.

- Algorithmic Trading: Agents that monitor markets and execute transactions at speeds and frequencies no human trader can match.

- Scripted Virtual Assistants: Customer-facing bots that handle FAQs, schedule appointments, and route requests within known conversation flows.

Agentic AI in Action

- Contact Center Orchestration: Dynamically routing, escalating, and synthesizing across multiple specialized agents to resolve complex customer issues end-to-end.

- Supply Chain Management: Autonomous adjustment of inventory, logistics, and procurement across distributed systems in real time, at scale.

- IT Operations (AIOps): Multi-agent monitoring that detects anomalies, diagnoses root causes, and initiates remediation without waiting for a human to trigger it.

- Scientific Research AI: Systems that generate hypotheses, design experiments, analyze results, and feed learnings back into the next iteration — autonomously.

The Risks Nobody Talks About Honestly

Elevated autonomy means elevated stakes. The more independent a system is, the more carefully you need to think about what happens when it goes wrong, and it will, eventually.

Technical Failures

Hallucinations, inconsistent memory across sessions, and cascading errors when one agent in a network produces flawed outputs that others act on uncritically.
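One common way to limit cascading errors is to validate each agent's output before the next agent acts on it. The sketch below assumes a hypothetical forecasting agent that emits a dictionary with `sku` and `quantity` fields; the field names and the checks are illustrative only.

```python
from typing import Callable, Dict, List


def check_forecast(output: Dict) -> List[str]:
    """Return a list of problems found in an upstream agent's output."""
    problems = []
    if not isinstance(output.get("sku"), str) or not output.get("sku"):
        problems.append("sku must be a non-empty string")
    quantity = output.get("quantity")
    if not isinstance(quantity, int) or quantity < 0:
        problems.append("quantity must be a non-negative integer")
    return problems


def hand_off(output: Dict, downstream: Callable[[Dict], str]) -> str:
    """Pass output to the next agent only if it survives validation."""
    problems = check_forecast(output)
    if problems:
        raise ValueError("rejected upstream output: " + "; ".join(problems))
    return downstream(output)


def ordering_agent(forecast: Dict) -> str:
    return f"order {forecast['quantity']} units of {forecast['sku']}"
```

A check like this does not catch a plausible but wrong number, so it complements monitoring rather than replacing it. What it does is stop structurally broken output from propagating through the network.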

Security Vulnerabilities

Adversarial prompt injection, misuse of granted tool access, and the challenge of auditing behavior in systems with emergent, non-deterministic outputs.
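A basic mitigation for tool misuse is to put every tool call behind a gateway that enforces per-agent grants and records each attempt for later audit. The following is a minimal sketch; `ToolGateway`, `ToolAccessError`, and the agent and tool names used with it are hypothetical.

```python
from typing import Any, Callable, Dict, List, Set, Tuple


class ToolAccessError(Exception):
    """Raised when an agent calls a tool it has not been granted."""


class ToolGateway:
    def __init__(
        self,
        tools: Dict[str, Callable[..., Any]],
        grants: Dict[str, Set[str]],
    ) -> None:
        self.tools = tools
        self.grants = grants  # agent_id -> names of tools it may call
        self.audit_log: List[Tuple[str, str, bool]] = []

    def call(self, agent_id: str, tool_name: str, *args: Any) -> Any:
        allowed = tool_name in self.grants.get(agent_id, set())
        # Log every attempt, including denied ones, so behavior can be audited.
        self.audit_log.append((agent_id, tool_name, allowed))
        if not allowed:
            raise ToolAccessError(f"{agent_id} may not call {tool_name}")
        return self.tools[tool_name](*args)
```

Because the grant check happens outside the model, a successful prompt injection can change what the agent asks for but not what it is permitted to do.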

Legal & Accountability Gaps

Who is liable when an autonomous system makes a decision that causes harm? Current legal frameworks weren't designed for agents that act independently at scale.

Hype and "Agent Washing"

Vendor overclaiming leads to misaligned expectations, botched deployments, and genuine erosion of trust, both in specific products and the category broadly.

Workforce Displacement

The transition from task automation to autonomous decision-making raises legitimate questions about roles, skills gaps, and the social contract around work.

Opacity and Trust

The more capable a system becomes, the harder it is to explain its reasoning. Black-box decisions in high-stakes contexts erode the trust needed for real adoption.

None of these risks mean you should avoid these technologies. They mean you should approach them with your eyes open, with governance frameworks, monitoring infrastructure, and clear accountability chains in place before you go to production.

Common Pitfalls and How to Avoid Them

The organizations that stumble with AI deployments tend to make the same few mistakes. Here's what those look like up close.

- Poor Data Hygiene

Low-quality, inconsistent, or biased training data doesn't just degrade performance; it embeds systematic errors into a system that will then act on those errors autonomously. No amount of architectural sophistication rescues a model trained on bad data.

- No Operational Oversight Plan

Shipping to production without a clear monitoring strategy, intervention protocol, or ownership structure is like handing someone car keys with no road rules. Autonomous systems need human checkpoints, not just at launch but continuously.

- Overpromising Capabilities

Stakeholder trust is fragile. Setting expectations that the technology can't meet, particularly with the "agentic" label, leads to disillusionment that can set back entire programs. Under-promise, over-deliver, and show incremental value.

A Practical Deployment Framework

- Diagnose before you build

Is your problem best solved by a narrow, task-specific agent or a broader orchestration system? This question deserves a real answer, not a default to whatever is newest.

- Start with a pilot, not a platform

Phase your rollout. A contained pilot generates real performance data, surfaces edge cases, and builds organizational fluency before you're committed at scale.

- Align AI initiatives to organizational goals — explicitly

Generic AI adoption for its own sake rarely delivers lasting value. Tie your AI architecture decisions to specific business outcomes, and define how you'll measure them.

- Build governance before you need it

Transparency, accountability structures, sandboxing, and throttling mechanisms should be designed in from the start, not retrofitted after an incident.
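As one example of a throttling mechanism, the sketch below caps how many actions an agent may take within a sliding time window. The `ActionThrottle` class and its parameters are hypothetical; the clock is injectable so the behavior can be tested without waiting on real time.

```python
import time
from collections import deque
from typing import Callable, Deque


class ActionThrottle:
    """Allow at most max_actions within any window_seconds span."""

    def __init__(
        self,
        max_actions: int,
        window_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self.clock = clock
        self._stamps: Deque[float] = deque()  # times of recent allowed actions

    def allow(self) -> bool:
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self._stamps and now - self._stamps[0] >= self.window_seconds:
            self._stamps.popleft()
        if len(self._stamps) >= self.max_actions:
            return False  # over the limit: caller should pause or escalate
        self._stamps.append(now)
        return True
```

An agent loop would call `allow()` before each external action and stop or escalate to a human when it returns `False`. That bounds how much damage a misbehaving agent can do before someone notices.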

Frequently Asked Questions

Can an AI agent become agentic AI just by adding more capabilities?

Not really. The distinction isn't just about the number of features; it's about architectural design and the role the system plays. An agent that gains more tools is still an agent if it operates within a fixed task scope and requires external orchestration. Agentic AI implies a different design philosophy: the system itself does the planning, coordination, and adaptation.

Do AI agents learn and improve on their own?

Some do, within constraints. Learning agents, the most advanced category of individual agents, improve through feedback and experience. But that learning typically stays within the boundaries of their defined task. Agentic AI, by contrast, adapts its strategies dynamically across multiple tasks and contexts in real time, drawing on a broader pool of experience.

Which is right for my business — an AI agent or agentic AI?

The right answer depends entirely on the problem you're solving. If you have a well-defined, repetitive task that needs automation at speed and scale, a properly scoped AI agent will likely outperform anything more complex, and cost significantly less to operate. If you're dealing with dynamic, multi-step workflows that require cross-system coordination and adaptive decision-making, agentic AI starts to make sense. Most organizations benefit from both, deployed in the right contexts.

How do I identify "agent washing" in vendor claims?

Ask for specifics. What decisions does the system make autonomously versus following a script? Can it handle novel situations outside its training distribution? Does it coordinate sub-processes, or does it execute a single workflow? Vague answers to concrete questions are a reliable signal. Genuine agentic capability is demonstrable — vendors building it can show you exactly how it works.

Why does understanding this distinction matter strategically?

Because misaligning your AI architecture to your actual problem is expensive and demoralizing. Organizations that deploy overpowered, under-governed agentic systems for simple tasks burn money and introduce unnecessary risk. Those that deploy narrow agents for complex coordination problems hit a ceiling fast. The distinction shapes procurement decisions, governance frameworks, talent strategy, and competitive positioning. Getting it right early matters.
